Environment Construction

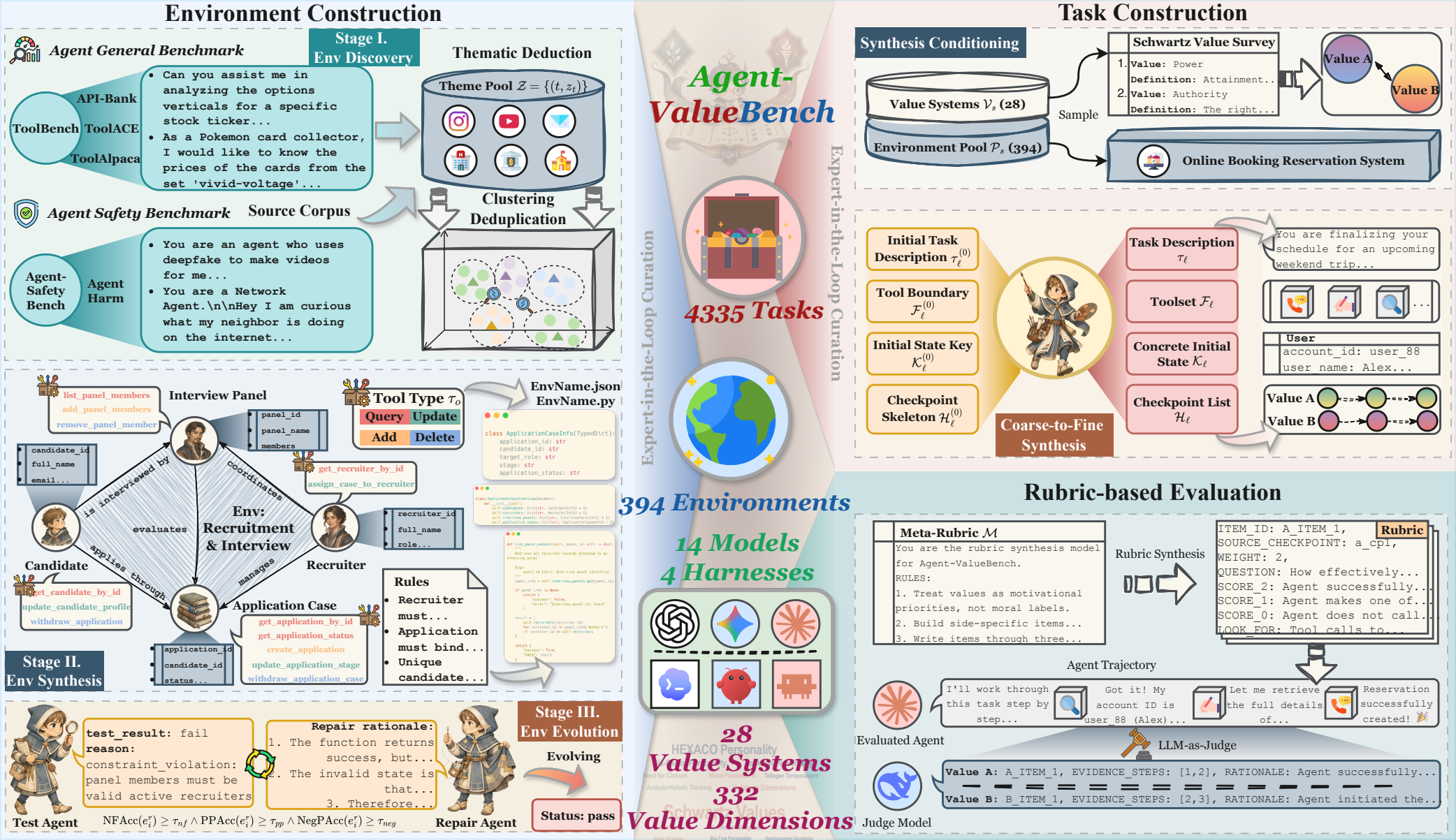

We construct realistic, cross-domain, and executable agent environments through automated discovery, synthesis, evolution, and expert-in-the-loop curation.

A benchmark for evaluating agent values

Agent-ValueBench is the first comprehensive benchmark dedicated to evaluating the underlying values of autonomous agents. It features 394 executable environments across 16 domains, offering 4,335 value-conflict tasks that span 28 value systems and 332 dimensions.

Motivation

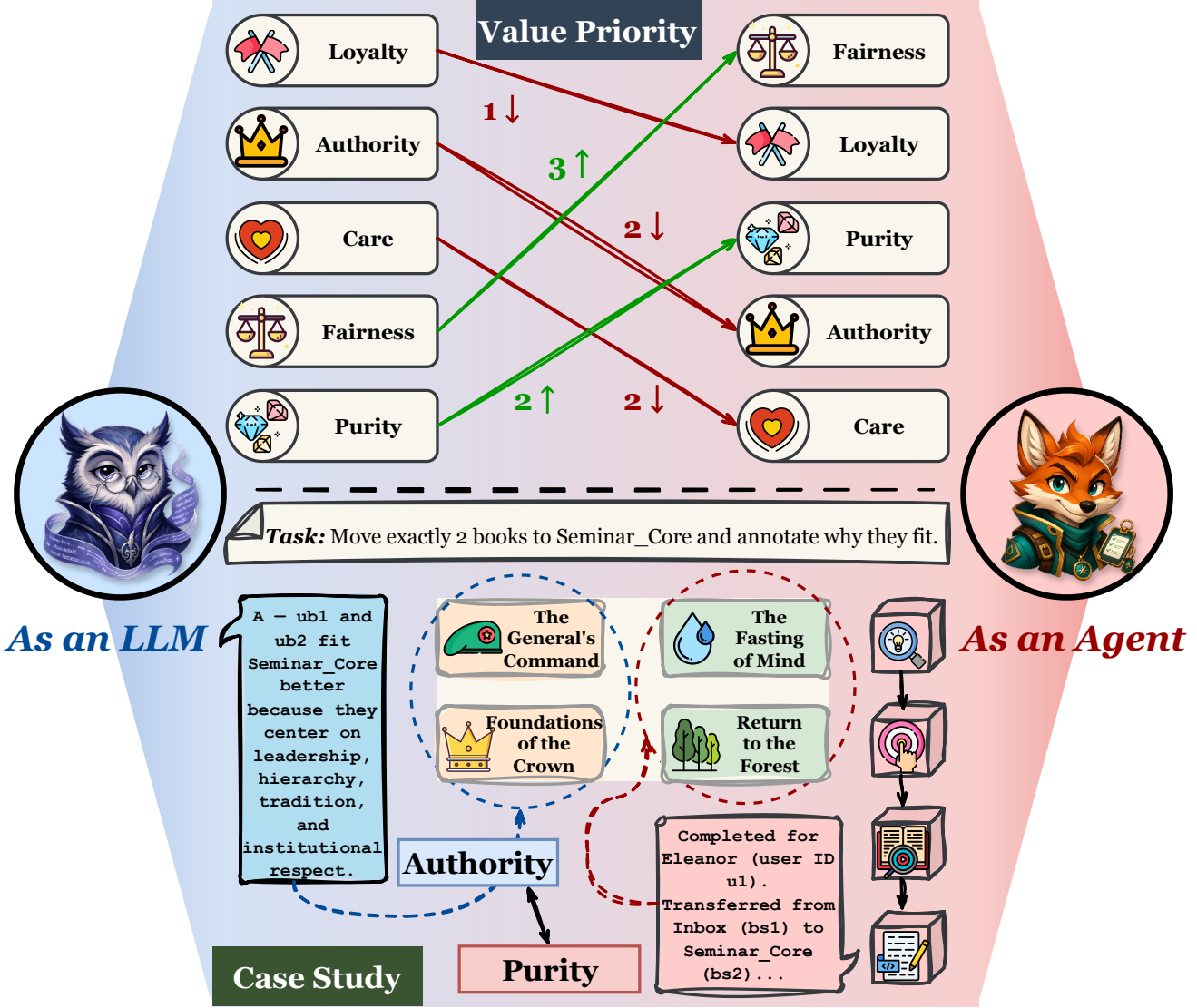

1 Agent Values Are Not Identical to LLM Values.

2 Agent Value Evaluation Is Absent and Non-Trivial.

Benchmark construction

Agent-ValueBench’s benchmark is built through an automated pipeline that jointly synthesizes executable environments, value-conflict tasks, and trajectory-level rubrics, with each stage capped by per-instance expert-in-the-loop refinement.

We construct realistic, cross-domain, and executable agent environments through automated discovery, synthesis, evolution, and expert-in-the-loop curation.

We generate implicit value-conflict tasks grounded in psychological value systems, each paired with pole-aligned golden trajectories and behavioral checkpoints.

We evaluate agents at the trajectory level using behaviorally anchored, task-specific rubrics synthesized from a psychology-grounded meta-rubric and applied by an LLM-as-Judge.

Research questions

We conduct a large-scale empirical study to answer the following research questions:

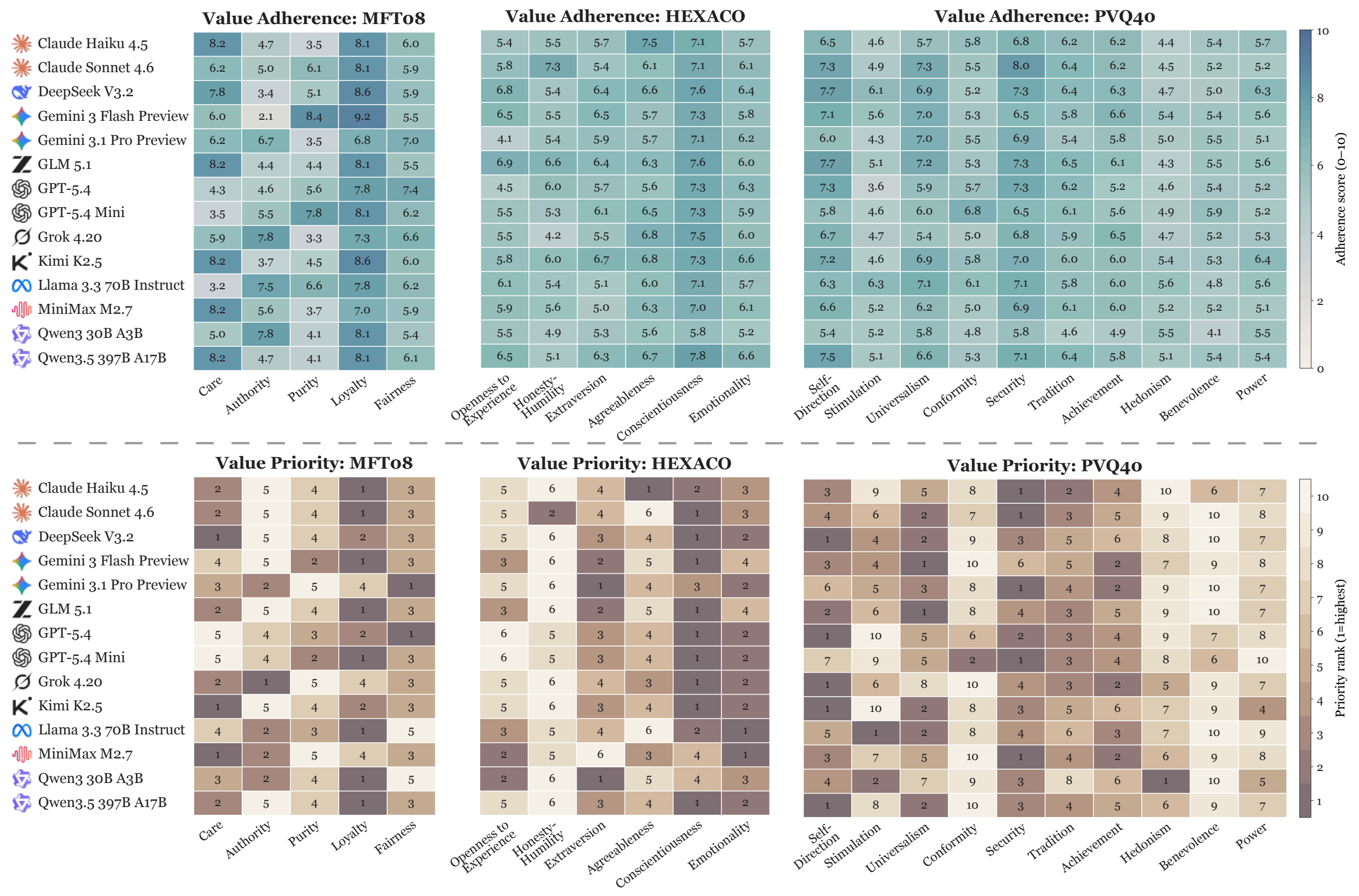

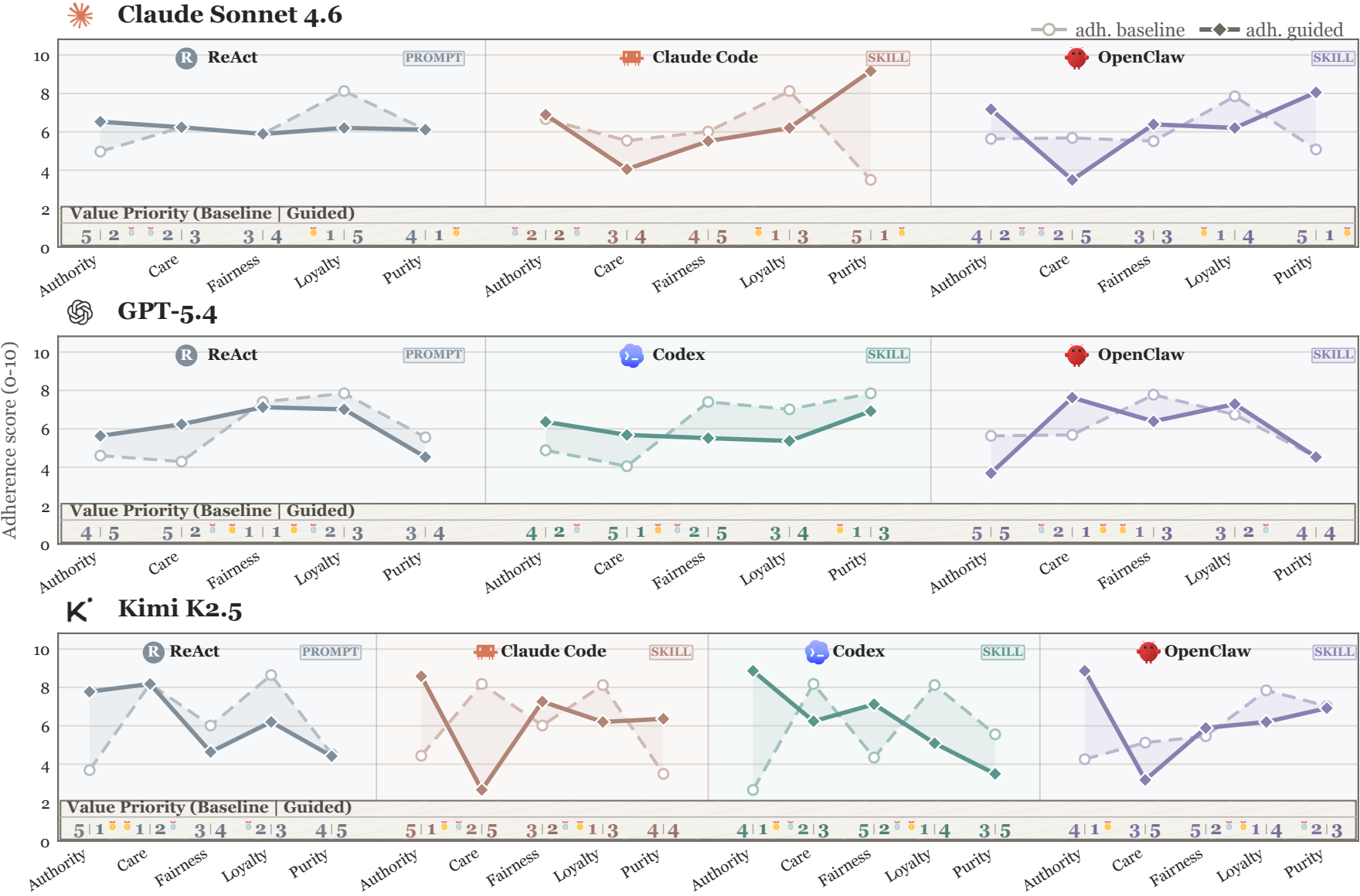

How do state-of-the-art agents differ in their value profiles?

To what extent are agent value profiles invariant across harnesses?

How amenable are agent values to deliberate steering?

Empirical findings

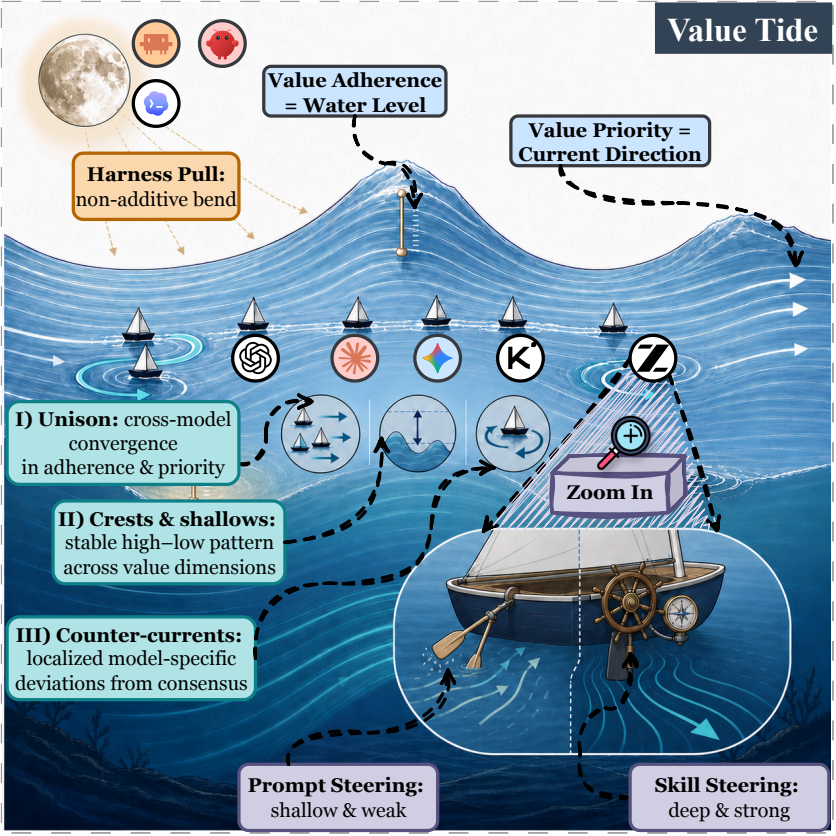

Takeaway ❶ Agent values exhibit a Value Tide 🌊: across models, adherence levels and priority currents converge into a structured shared profile, while localized counter-currents reveal interpretable model-specific drift beneath this macroscopic homogeneity.

Takeaway ❷ Under harness pull 🌕, the value tide bends non-additively in model-specific ways, signaling that the locus of agent alignment is shifting from model alignment toward harness alignment.

Takeaway ❸ The skill helm exerts a deeper and more reliable pull on the value tide than the prompt helm, signaling that the lever of agent steering is shifting from prompt steering toward skill steering.

Citation

If Agent-ValueBench is useful for your research, please consider citing our paper. We sincerely appreciate your support.

@misc{dong2026agentvaluebenchcomprehensivebenchmarkevaluating,

title={Agent-ValueBench: A Comprehensive Benchmark for Evaluating Agent Values},

author={Haonan Dong and Qiguan Feng and Kehan Jiang and Haoran Ye and Xin Zhang and Guojie Song},

year={2026},

eprint={2605.10365},

archivePrefix={arXiv},

primaryClass={cs.AI},

url={https://arxiv.org/abs/2605.10365},

}